15 - 21 October compared with CloudStore 1 - 7 October

When creating the Digital Marketplace we did a lot of user research to understand our buyers, and used the insights to inform our design decisions. Now that the Digital Marketplace is in public beta we can validate our design decisions with analytics, and find new user insights to inform service improvements.

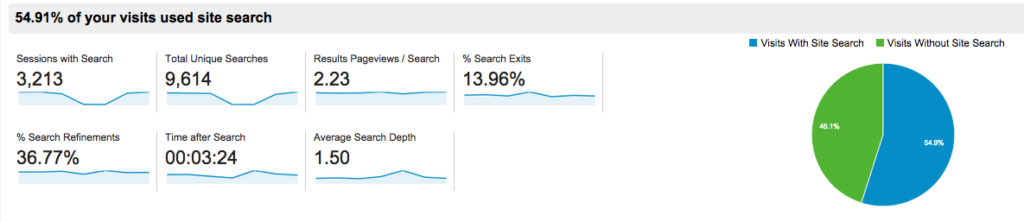

For example, we found that people wanted better search functionality than CloudStore provided, so we prioritised search in the user interface and (hopefully) improved the algorithm. The data below shows that more people are searching, and early indications suggest they’re using the search function as intended.

We only compared one week’s worth of data from CloudStore with a week from the Digital Marketplace, so we don’t have any definite conclusions. But it gives us a starting point, and helps us articulate questions that we can ask of the site when we have more data.

We switched off CloudStore on October 14, and redirected traffic to the Digital Marketplace. We published minimal comms about the switch-off, which made it easier to get a natural view of buyer usage, without artificial, exploratory behaviour.

Similar number of contacts about the service

(Excludes supplier queries about differences between CloudStore and Digital Marketplace)

On our helpdesk, there was a similar number of service-related queries from buyers. Due to variables (one of them being that the email link text was completely different) we don't want to get too hung up on exact comparisons. But it's enough to say that a similar number of contacts is positive. We'd expect an unfamiliar service to generate a spike in queries as people struggled with the new interface, but this hasn't happened.

People who had problems using the Digital Marketplace were usually struggling to generate a manageable list of results - either returning too many services or too few. We'll look further into feedback and analytics to find out how to support buyers further.

On the other side, here's a positive comment from one of our users:

“I’ve just run the same search (Accessibility Testing) on both the CloudStore and the Marketplace and I’ve found the Marketplace to be infinitely less confusing! My results were much more closely matched to my search and did not seem to rely on me understanding the difference between the various types of service.”

Buyers starting their journey

We redirected CloudStore traffic to a start page on GOV.UK. There are signs that some people aren’t clicking through from the GOV.UK start page to the Digital Marketplace because they’re getting confused. 78% of people clicked through, but some of the 22% who stayed on GOV.UK tried to search for services (which they won’t find on GOV.UK), or went back to the page they came from. Some confusion is expected with a new service, but we’ll keep an eye on this to make sure a higher percentage of people click through.

In terms of the Digital Marketplace service itself - around half of people started their journey on the homepage. A small percentage of people left after viewing just the homepage (bounced) - only 13% as opposed to 32% on CloudStore, which had a notoriously confusing interface. This is good because it suggests that fewer people are landing on the site in error, and/or more people are seeing a route to what they need.

Understanding if search is better

Digital Marketplace has been designed to facilitate search, and 55% of people used keywords to search, as opposed to 29% on CloudStore. And there are other indications that people are searching as we intended. Only 14% exit immediately after searching (down from 24% on CloudStore), which suggests fewer people are giving up. We're also finding an increase in search refinements (people searching more than once), and phrases with three or more words, which suggests that people are engaging in the sophisticated search behaviour we've tried to facilitate. We’ll need to do further analysis to see whether people are getting useful results from this behaviour.
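To put those search figures side by side, here is a rough sketch in Python that works out the change in percentage points and the relative change for each measure. The function name `compare` and the `metrics` dictionary are purely illustrative and not part of any analytics tool; the percentages are the ones quoted above. With only one week of data on each side, the output is a starting point rather than a conclusion.

```python
def compare(before_pct: float, after_pct: float) -> tuple[float, float]:
    """Return (percentage-point change, relative change in %) from before to after."""
    point_change = after_pct - before_pct
    relative_change = point_change / before_pct * 100
    return point_change, relative_change


# Figures quoted in this post: (CloudStore %, Digital Marketplace %).
# The dictionary keys are illustrative labels, not official metric names.
metrics = {
    "used keyword search": (29, 55),
    "exited immediately after searching": (24, 14),
}

for name, (cloudstore, marketplace) in metrics.items():
    points, relative = compare(cloudstore, marketplace)
    print(f"{name}: {points:+.0f} points, {relative:+.0f}% relative")
```

Reporting both numbers helps avoid confusion: keyword search use rose by 26 percentage points, which is roughly a 90% relative increase on the CloudStore figure.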

Usage of service pages

Almost a third of people started their visits on service pages. We assume that most of them have been through the initial bit of the service-selection process, and are returning to get more information about the services in their shortlist. But it may be that they've been given the link to a service directly by a supplier, or found it directly in Google. We can now segment this service-focussed audience to learn more about them.

One of the main aims of the service is getting more people to more services, to increase competition and ultimately sales. So we'll be monitoring this further, and acting on user insight from Google Analytics and user feedback to achieve this.

So far, we’ve got a few useful indications of user behaviour to go on. We’re interrogating the data further to learn more about their needs, and work out what to improve and how we’ll benchmark this. Alongside this we’re working out what overall success looks like, pinning down the most insightful measures that will show us whether people are getting what they need, and if not point us to the reason why.

2 comments

Comment by David Dinsdale posted on

It would be great if Cabinet Office published the data for products that are listed on the Digital Marketplace as part of its open data initiative. Is that likely?

Comment by Raphaelle Heaf posted on

We are looking further into opening up data for the Digital Marketplace and have started by producing a performance dashboard here: https://www.gov.uk/performance/digital-marketplace

This will continue to be expanded and iterated as the Digital Marketplace is developed.