Since the ‘earliest usable product’ of the Digital Outcomes and Specialists buying journey went live at the end of April, over 110 opportunities for work in the public sector have been published on the Digital Marketplace. These are made up of:

- 26% digital outcomes opportunities

- 69% digital specialists opportunities

- 5% user research opportunities

We always said we’d iterate, so here’s how we’re doing it.

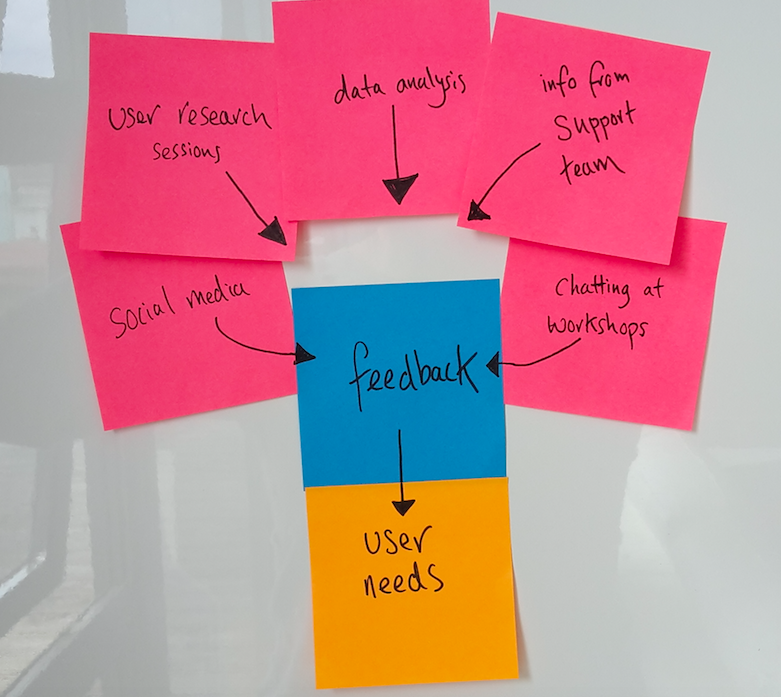

We’re listening to users

We’ve been listening to feedback from buyers and suppliers to find out how they’ve found the buying process. We’ve been collecting data from:

- buyers and suppliers who’ve contacted our Digital Marketplace support team or the Crown Commercial Service

- social media

- workshops and demonstrations aimed at helping buyers understand the new buying process

- user research sessions in our labs

- contextual research sessions in users’ offices

- data analysis of how buyers and suppliers are using the Digital Marketplace

Start with user needs

We started with the first GDS design principle, ‘start with user needs’, and reviewed user feedback to understand:

- the things users want to be able to do

- the common problems users are having

- the things users struggle to understand

We grouped feedback by theme, eg evaluation criteria, and used the information users provided to identify the underlying problems they were having. We kept in mind that what users ask for isn’t always what they need. This helped us to create a long list of user needs.

Prioritising user needs

To decide which area to iterate on first, the whole delivery team got together to look at the list of user needs we’d found so far. We decided how to prioritise each one based on:

- the impact it would have on the user if we addressed the need, eg, high, medium or low

- how well we understood the need, eg not at all (so we’d need to do a mini-discovery) or really well (so we wouldn’t need to do further user research)

We used these criteria to put all the needs on a matrix. The buyer needs are on pink post-it notes and the supplier needs are on orange.

We’ll continue to review this board every 2 weeks to consider any new user needs we’ve identified since the last review.

Prioritising work

We used the prioritised matrix to identify the user needs we’d work on first. We had to balance two kinds of need: well-understood needs, which the delivery team could build and release quickly, and less well-understood needs, which the user research and design team could work on in parallel. This gave us a prioritised list of things to work on: a ‘product backlog’.

We picked up the needs at the top of the list first and started to work through them. Every piece of work starts with a ‘product kickoff’ meeting where we identify the detailed user needs and any constraints and limitations we need to consider.
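As a rough illustration only, the prioritisation described above could be sketched in code. This is a minimal sketch under stated assumptions, not a tool the team used: the names `UserNeed`, `place_on_matrix` and `split_by_track`, the numeric impact ranks and the "well" label for understanding are all hypothetical, and the example needs are placeholders rather than real ratings.

```python
from dataclasses import dataclass

# Assumed ranking for the "high, medium or low" impact scale in the post.
IMPACT_RANK = {"high": 3, "medium": 2, "low": 1}

# Assumed label for a need that requires no further user research.
WELL_UNDERSTOOD = "well"


@dataclass(frozen=True)
class UserNeed:
    summary: str
    user_type: str      # "buyer" or "supplier" (pink or orange post-it)
    impact: str         # "high", "medium" or "low"
    understanding: str  # e.g. "well" or "not at all"


def place_on_matrix(needs):
    """Group needs into matrix cells keyed by (impact, understanding)."""
    matrix = {}
    for need in needs:
        matrix.setdefault((need.impact, need.understanding), []).append(need)
    return matrix


def split_by_track(needs):
    """Order needs by impact, then split them into two parallel tracks.

    Well-understood needs go to the delivery track; everything else goes
    to the user research and design track. sorted() is stable, so needs
    with equal impact keep their original order.
    """
    ordered = sorted(needs, key=lambda n: IMPACT_RANK[n.impact], reverse=True)
    delivery = [n for n in ordered if n.understanding == WELL_UNDERSTOOD]
    research = [n for n in ordered if n.understanding != WELL_UNDERSTOOD]
    return delivery, research


# Placeholder needs with made-up ratings, purely for illustration.
example_needs = [
    UserNeed("example need A", "buyer", "medium", "well"),
    UserNeed("example need B", "supplier", "high", "not at all"),
    UserNeed("example need C", "buyer", "high", "well"),
]

delivery_backlog, research_backlog = split_by_track(example_needs)
for need in delivery_backlog:
    print("build:", need.summary)
for need in research_backlog:
    print("research first:", need.summary)
```

Keeping the two tracks as separate lists mirrors the balance described above: one list the delivery team can build from straight away, and one that needs more research before anything is built.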

What we’ve been working on

The top-priority user needs we’ve worked on so far include:

- As a Digital Services 2 buyer, I can’t log in with my existing account details

- As a buyer, I can’t see details of my question and answer session once I’ve published my requirements

- As a buyer, I don’t understand how evaluation criteria are weighted and used to evaluate suppliers

- As a supplier, I don’t know how the buying process works for me

Measure and learn

Now we’ve made changes to the design, we need to make sure that we’ve actually solved users’ problems. We’re measuring this in several ways. We’re asking ourselves if:

- users are still asking customer support the same question

- users are still raising this as a problem in user research sessions

- the problems buyers were having when writing their opportunities are still there in new opportunities published after we’ve made a change

As we learn more about how users are using Digital Outcomes and Specialists, we’ll blog about the changes we make and why we’ve made them.